Free Delivery Across the UK on All Orders | 100% Secure Ordering | 4-Step Design Check Before Printing | Award-Winning Support

Email: sales@nearprint.co.uk

- Home

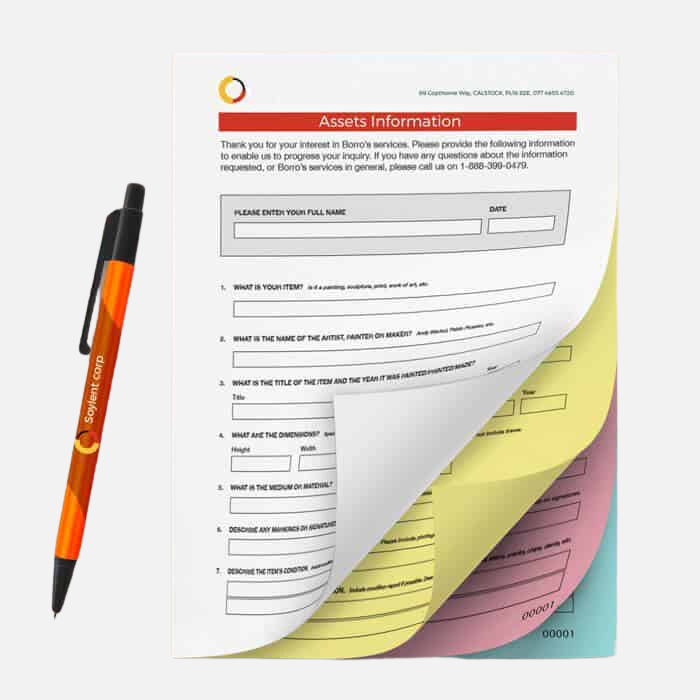

- NCR Printing

- Offers

- Business Cards

Business Cards Design Template

Standard (85mm x 55mm)

Mini (85mm x 25mm)

Square (55mm x 55mm)

- Stationery

- Recycled

- Flyers

- Banners

- Envelopes

- Promotional

NearPrint.co.uk

Near Print is an online print company which helps businesses in the UK with physical and digital products.

Monday to Friday 9am – 5:30pm

Call us: 01604 31 2446

Email: sales@nearprint.co.uk

Registered Address:

128 City Road, London, EC1V 2NX

© 2023 NearPrint is a trading name of Bizztor Limited. Registered in the UK. All rights reserved.